InstructFX2FX:

Iterative Audio FX Refinement

via LLM + CLAP Optimization

CNMAT

Research Group 2 Final Presentation

2026/04/29

Text-to-Preset Generation with Human-in-the-Loop Optimization

Group 2: Song-Ze Yu, Charlotte Zhang, Yuxuan Cai, Milan Liessens Dujardin

CNMAT, UC Berkeley


CNMAT | Research Group 2 | 2026/04/29

The Problem: Sequential FX Refinement

Given existing parameters P, how can we use natural-language instructions {I1, I2, ...} to make the sound better fit the user's needs?

- Audio engineers iteratively refine FX parameters

- Each instruction builds on existing state, not from scratch

- No existing system handles multi-turn sequential refinement

Raw Audio + FX Params P → Prompt 1: "make it warm" → Refined Params P' → Prompt 2: "too dark, brighter" → Refined Params P'' → ...


Related Works

Text2FX

CLAP-guided optimization of FX parameters from text

Single prompt only


LLM2FX

LLM generates FX parameters from descriptors

Single prompt, no optimization


LLM2FX-tools (ICLR 2026)

LLM tool calls to select & order FX chain

Different problem: reverse engineering


FxSearcher

Bayesian optimization over CLAP for non-differentiable VST/AU plugins

Gradient-free, single prompt


Gap: No existing system combines LLM intelligence with gradient-based refinement for sequential prompting


Our Hypothesis

LLM = The Brain

- Selects which FX to apply

- Orders the signal chain

- Generates reasonable initial parameters

- Audio engineering domain knowledge

+

CLAP = Ears + Hands

- Perceives audio in embedding space

- Gradient descent refines parameters

- Moves sound toward target semantics

- Perceptual loss, not numeric scoring

Combined: LLM provides the starting point, CLAP provides the refinement direction


Introducing InstructFX2FX

Iterative text-to-preset refinement with persistent session state

InstructFX2FX (Ours)

Multi-turn refinement of audio FX presets: an LLM picks the FX chain and sensible starting parameters, then CLAP-guided optimization adjusts the sound step by step to match each natural-language instruction.

Built for sequential prompting with persistent session state, unlike pipelines that treat each text query in isolation (no accumulating FX preset).

- LLM selects & orders effects; differentiable FX use gradient descent

- Other effects use Bayesian optimization (same stack as FxSearcher-style search)

- Session keeps accumulated parameters across user instructions


Current Harness

Input

Dry Audio + User Instruction + Existing Params P

Layer 1

FX Selector Agent

Default: Gemini 3.1 Pro

Orchestrator

Merge Session FX + New FX

carry prior chain forward

Layer 2

Parser

reuse_all / initialize_all / reuse_and_initialize

Layer 3a

CLAP GD

EQ, Reverb

Layer 3b

Bayesian Optimization (BO)

Comp, Dist, Delay, PitchShift, Bitcrush

Output

Updated Params + Rendered Audio

Session Memory

history[] + current_params{}

church -> [rev]

add grit -> [rev, dist]

Layer 1 reads history.

Later turns reuse stored params.


Execution Rules

Turn 1 must pick at least one FX

Later turns can return []

Case 2: init only

Cases 1 / 3: DASP first, then BO
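The orchestrator's merge step and the execution rules can be sketched in a few lines of Python. This is a minimal sketch, assuming the session is a plain record of prompt history, chain order, and per-FX params; `Session`, `merge_chain`, and `record_turn` are hypothetical names, not the project's actual code, and parameter values are left to Layers 2 and 3.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical session memory (illustrative names, not the project's API)."""
    history: list = field(default_factory=list)         # one record per user instruction
    chain: list = field(default_factory=list)           # FX names in signal-chain order
    current_params: dict = field(default_factory=dict)  # fx name -> params, filled in by Layer 3


def merge_chain(session_chain: list, new_fx: list) -> list:
    """Carry the prior chain forward and append newly selected FX, skipping any already present."""
    merged = list(session_chain)
    for fx in new_fx:
        if fx not in merged:
            merged.append(fx)
    return merged


def record_turn(session: Session, instruction: str, new_fx: list) -> list:
    """Merge the selector's output into the session, enforcing the execution rules."""
    is_first_turn = not session.history
    if is_first_turn and not new_fx:
        raise ValueError("Turn 1 must pick at least one FX")
    # Later turns may pass [] to re-tune the existing chain without adding FX.
    session.chain = merge_chain(session.chain, new_fx)
    session.history.append({"instruction": instruction, "chain": list(session.chain)})
    return session.chain
```

Replaying the session-memory example, "church" yields ["rev"], "add grit" yields ["rev", "dist"], and a later turn whose selector returns [] leaves the chain unchanged for re-tuning.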


Layer 1: FX Selector Agent

- Model is configurable; default is Gemini 3.1 Pro

- Each FX is exposed as an add_<fx> tool

- Tool call order = signal chain order

- Tools already in the session chain are hidden on refinement turns

Initial Turn

Empty history

Clean "pick the chain" prompt.

tool_choice="required"


Input: "make it sound like a church"

Output: [add_rev]


Refinement Turn

History-aware add-only prompt

Sees prior prompts + current chain.

tool_choice="auto" so zero tool calls are allowed.


Current chain: [rev, dist]

Request: "too harsh, soften it"

Output: []


Available Tools

add_eq

add_comp

add_rev

add_dist

add_delay

add_pitchshift

add_bitcrush

What Changed From Last Version

- Selector now reads session.history

- Can decide to add nothing and just re-tune

- Prevents double-adding an FX already in the chain
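One way the per-turn tool list could be assembled is sketched below. It assumes an OpenAI-style function-tool schema (`{"type": "function", "function": {...}}`) with empty argument objects; the Gemini SDK's actual tool format may differ, and `FX_NAMES`, `build_selector_request`, and `chain_from_tool_calls` are hypothetical. It illustrates the rules on this slide: one `add_<fx>` tool per effect, tools for FX already in the chain are hidden, and `tool_choice` switches from "required" to "auto" after the first turn (an empty chain stands in for "empty history" here).

```python
FX_NAMES = ["eq", "comp", "rev", "dist", "delay", "pitchshift", "bitcrush"]


def build_selector_request(current_chain: list) -> tuple:
    """Return (tools, tool_choice) for one selector turn (hypothetical helper)."""
    tools = [
        {
            "type": "function",
            "function": {
                "name": f"add_{fx}",
                "description": f"Append {fx} to the end of the FX chain.",
                "parameters": {"type": "object", "properties": {}},
            },
        }
        for fx in FX_NAMES
        if fx not in current_chain  # hide tools for FX already in the chain
    ]
    # Initial turn must pick at least one FX; refinement turns may make zero calls.
    tool_choice = "required" if not current_chain else "auto"
    return tools, tool_choice


def chain_from_tool_calls(tool_call_names: list) -> list:
    """Tool-call order is signal-chain order: ["add_rev", "add_dist"] -> ["rev", "dist"]."""
    return [name.removeprefix("add_") for name in tool_call_names]
```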


Layer 2: Parser — Session-Aware Routing

The route now depends on whether the selected full chain already has params in the session

Case 1

All FX exist in session

Reuse existing params

Optimize

Case 2

No FX exist yet

LLM initialize all

Render once

Case 3

Mixed — some exist, some new

LLM init missing + merge

Optimize

Example: Case B from `tests/test_pipeline.py`

Turn 1: "make it sound like a church"

Full chain: [rev] → Case 2: initialize reverb, then render once

Turn 2: "add some grit"

Full chain: [rev, dist] → Case 3: reuse rev + init dist → optimize

Turn 3: "too harsh, soften it"

Full chain: [rev, dist] → Case 1: reuse both → re-optimize

Current issue: BO does not reliably reduce distortion.
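The three-way routing can be expressed as a small function over the selected chain and the stored params. A minimal sketch, assuming session params are a dict keyed by FX name; the return strings match the parser labels on the harness slide, while `route`, `starting_params`, the `llm_init` callable, and the `"mix"` reverb parameter in the test are hypothetical stand-ins.

```python
def route(full_chain: list, session_params: dict) -> str:
    """Layer 2 routing: which FX in the selected full chain already have stored params?"""
    existing = [fx for fx in full_chain if fx in session_params]
    if len(existing) == len(full_chain):
        return "reuse_all"             # Case 1: reuse stored params, then optimize
    if not existing:
        return "initialize_all"        # Case 2: LLM initializes everything, render once
    return "reuse_and_initialize"      # Case 3: LLM initializes new FX, merge, then optimize


def starting_params(full_chain: list, session_params: dict, llm_init) -> dict:
    """Reuse stored params; ask `llm_init` (hypothetical callable) only for missing FX."""
    missing = [fx for fx in full_chain if fx not in session_params]
    fresh = llm_init(missing) if missing else {}
    return {
        fx: dict(session_params[fx]) if fx in session_params else fresh[fx]
        for fx in full_chain
    }
```

Under these assumptions, the Case B trace goes initialize_all → reuse_and_initialize → reuse_all.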


Layer 3: Dual-Track Optimization

CLAP Gradient Descent (DASP)

For differentiable effects: EQ, Reverb

Params → DASP FX → Audio → CLAP → Loss

←←← Backpropagation ←←←

- Adam optimizer, lr=0.01

- Inverse-sigmoid (logit) reparameterization keeps params in [0, 1]

- Configurable iterations (default 1000)
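The inverse-sigmoid reparameterization with Adam can be illustrated on a single normalized parameter. This is a toy sketch: a quadratic loss toward a hypothetical target value stands in for the CLAP loss, and the gradient is written out by hand instead of being backpropagated through a DASP effect. It keeps the slide's defaults (lr = 0.01, 1000 iterations) and uses the standard Adam update; `adam_refine` is a hypothetical name.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def logit(p: float, eps: float = 1e-6) -> float:
    """Inverse sigmoid; clamp so 0 and 1 map to finite logits."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))


def adam_refine(p0: float, target: float, lr: float = 0.01, iters: int = 1000,
                beta1: float = 0.9, beta2: float = 0.999, eps: float = 1e-8) -> float:
    """Refine one normalized parameter with Adam in logit space.

    Toy stand-in loss: (sigmoid(z) - target)^2. In the real pipeline the loss
    would be a CLAP embedding loss backpropagated through a DASP effect.
    """
    z = logit(p0)          # unconstrained variable that Adam updates
    m = v = 0.0
    for t in range(1, iters + 1):
        p = sigmoid(z)
        grad = 2.0 * (p - target) * p * (1.0 - p)   # chain rule through the sigmoid
        m = beta1 * m + (1 - beta1) * grad
        v = beta2 * v + (1 - beta2) * grad * grad
        m_hat = m / (1 - beta1 ** t)                # bias-corrected moments
        v_hat = v / (1 - beta2 ** t)
        z -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return sigmoid(z)      # mapped back into (0, 1)
```

Because Adam updates the unconstrained logit and the effect only ever sees sigmoid(z), the parameter cannot leave (0, 1) however large a step is, so no clipping is needed.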

Bayesian Optimization (Pedalboard)

For non-differentiable effects: Comp, Dist, Delay, PitchShift, Bitcrush

Sample → Pedalboard → Audio → CLAP → Score

GP surrogate → LCB acquisition

- scikit-optimize (gp_minimize)

- LCB acquisition, kappa=5

- Early stopping (after 30 iterations without improvement)

Sequential pipeline: DASP output audio → feeds as input to Pedalboard stage
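The early-stopping rule fits naturally as a scikit-optimize callback: `gp_minimize` calls each callback with the running `OptimizeResult` after every objective evaluation and stops when one returns True. This sketch reads only `res.func_vals`; the class name `NoImprovementStopper` is hypothetical, and "no improvement" here means no strictly lower objective value.

```python
class NoImprovementStopper:
    """Stop a minimization loop after `patience` calls without a new best value."""

    def __init__(self, patience: int = 30):
        self.patience = patience

    def should_stop(self, func_vals) -> bool:
        vals = list(func_vals)
        if not vals:
            return False
        # Index of the first call that reached the best (lowest) score so far.
        best_idx = min(range(len(vals)), key=vals.__getitem__)
        return len(vals) - 1 - best_idx >= self.patience

    def __call__(self, res) -> bool:
        # scikit-optimize passes the running OptimizeResult; func_vals holds all scores.
        return self.should_stop(res.func_vals)
```

A call could then look like `gp_minimize(objective, dimensions, acq_func="LCB", kappa=5.0, callback=[NoImprovementStopper(30)])`, where `objective` (hypothetical) renders the Pedalboard chain and returns a CLAP-based loss to minimize.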


Loss Functions in CLAP Embedding Space

Directional Loss

If the text moves A → B, the audio should move in the same direction.

text_dir = emb(B) - emb(A)

audio_dir = emb(audio_t) - emb(audio_0)

Semantic Similarity

Direct similarity to target text

L = 1 - cos(audio, target)

Guided Semantic

Maximize similarity to the target text, minimize similarity to a negative text

L = 1 - cos(a, t+) + cos(a, t−)

a = audio embedding; t+ / t− = target / negative text embeddings

t− = "harsh, distorted, muddy..."
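The three losses reduce to cosine arithmetic on embedding vectors. A minimal sketch on plain Python lists standing in for CLAP embeddings; the slide defines only the two directions for the directional loss, so the form 1 - cos(audio_dir, text_dir) used here is an assumption (a common choice for directional embedding losses).

```python
import math


def cos(u, v, eps=1e-12):
    """Cosine similarity; returns 0.0 if either vector is (near) zero."""
    dot = sum(a * b for a, b in zip(u, v))
    norms = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norms if norms > eps else 0.0


def sub(u, v):
    return [a - b for a, b in zip(u, v)]


def directional_loss(audio_t, audio_0, text_a, text_b):
    """Assumed form: 1 - cos(audio_dir, text_dir)."""
    text_dir = sub(text_b, text_a)
    audio_dir = sub(audio_t, audio_0)  # zero at iteration 0, hence the guard in cos()
    return 1.0 - cos(audio_dir, text_dir)


def semantic_loss(audio, target):
    return 1.0 - cos(audio, target)


def guided_semantic_loss(audio, target_pos, target_neg):
    # Pull toward the target text, push away from the negative anchor.
    return 1.0 - cos(audio, target_pos) + cos(audio, target_neg)
```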


Demo

Live System Demonstration


Interactive: input audio + text prompt → optimized FX parameters


Case Study: Case B

Routing works; distortion re-tuning is still weak.

Prompt 1: "church"

Prompt 2: "add grit"

Prompt 3: "soften it"

Turn 1 Result: ["rev"]

Case 2: init only

Turn 2 Result: ["rev", "dist"]

Case 3: add distortion (drive_db = 10.0)

Turn 3 Result: ["rev", "dist"]

Case 1: re-tune; distortion does not soften (drive_db stays 10.0)

What works

- Session carries the chain forward correctly

- Refinement turn adds no extra FX

- Parser lands on the reuse path

Main issue

- BO is not pushing distortion downward

- Movement happens mostly in reverb

- The bottleneck appears to be the objective / CLAP, not routing


Evaluation Setup

Dataset: SocialFX

7 EQ descriptor words (count): warm (93), bright (74), soft (74), loud (54), harsh (39), calm (26), heavy (25)

Selected by: count ≥ 20 AND consistency ≥ 0.45

10 Directed Word Pairs (A → B): warm→bright, bright→warm, heavy→calm, heavy→harsh, harsh→soft, harsh→calm, warm→heavy, soft→loud, calm→loud, loud→heavy

Metric: MMD

Maximum Mean Discrepancy

- 35-D DSP features (spectral, MFCC, temporal)

- Gaussian kernel, median bandwidth

- Lower = closer to GT distribution
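A minimal sketch of the metric, assuming the biased (V-statistic) estimate of squared MMD and the common median heuristic that sets the bandwidth to the median pairwise distance over the pooled samples; the slide does not say which estimator or exact bandwidth rule is used. The inputs would be the 35-D DSP feature vectors; `mmd2_gaussian` is a hypothetical name.

```python
import math
import statistics


def sq_dist(x, y):
    return sum((a - b) ** 2 for a, b in zip(x, y))


def mmd2_gaussian(X, Y):
    """Biased MMD^2 between two non-empty samples X and Y (lists of feature vectors)."""
    Z = X + Y
    # Median heuristic: bandwidth = median pairwise distance over the pooled samples.
    dists = [math.sqrt(sq_dist(Z[i], Z[j])) for i in range(len(Z)) for j in range(i + 1, len(Z))]
    sigma = statistics.median(dists) or 1.0  # guard against all-identical samples

    def k(a, b):
        return math.exp(-sq_dist(a, b) / (2.0 * sigma ** 2))  # Gaussian kernel

    def mean_k(A, B):
        return sum(k(a, b) for a in A for b in B) / (len(A) * len(B))

    return mean_k(X, X) + mean_k(Y, Y) - 2.0 * mean_k(X, Y)
```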

Ground Truth Construction

Dry Audio → Apply EQ_A → Apply EQ_B

Sequential GT: audio with params_A, then params_B

50 random samples per word per instrument
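The sequential ground truth can be sketched with a toy EQ. This assumes each descriptor maps to a list of per-band gain curves (in dB) and models EQ as a per-band magnitude scale, whereas the real pipeline renders audio; `apply_eq`, `sequential_gt`, and the `presets` layout are hypothetical.

```python
import random


def apply_eq(band_mags, gains_db):
    """Toy EQ: scale each band's magnitude by its gain (dB -> linear)."""
    return [m * 10 ** (g / 20.0) for m, g in zip(band_mags, gains_db)]


def sequential_gt(dry_bands, presets, word_a, word_b, n=50, seed=0):
    """Build n ground-truth outputs for A -> B: dry -> EQ_A -> EQ_B."""
    rng = random.Random(seed)
    outs = []
    for _ in range(n):
        params_a = rng.choice(presets[word_a])  # one sampled curve for descriptor A
        params_b = rng.choice(presets[word_b])  # one sampled curve for descriptor B
        outs.append(apply_eq(apply_eq(dry_bands, params_a), params_b))
    return outs
```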


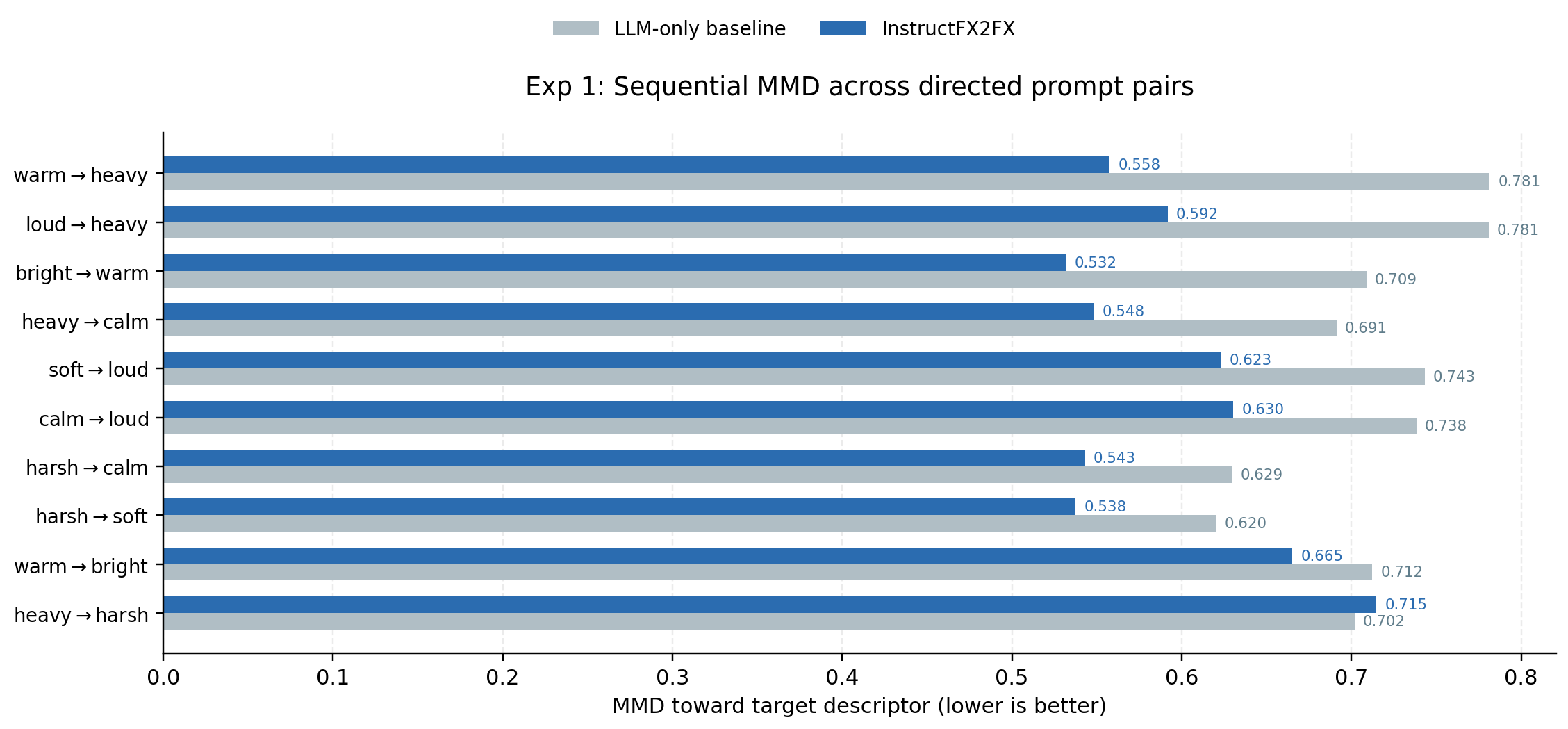

Exp 1: Sequential MMD

System output vs. sequential ground truth — lower MMD is better

MMD Comparison: InstructFX2FX vs LLM-only Baseline

90% of word pairs improved (9 / 10)

Key Finding:

CLAP gradient descent consistently outperforms LLM-only reprompting across 9 of 10 directed word pairs.

Only heavy→harsh slightly regresses (MMD 0.715 for InstructFX2FX vs. 0.702 for the baseline).


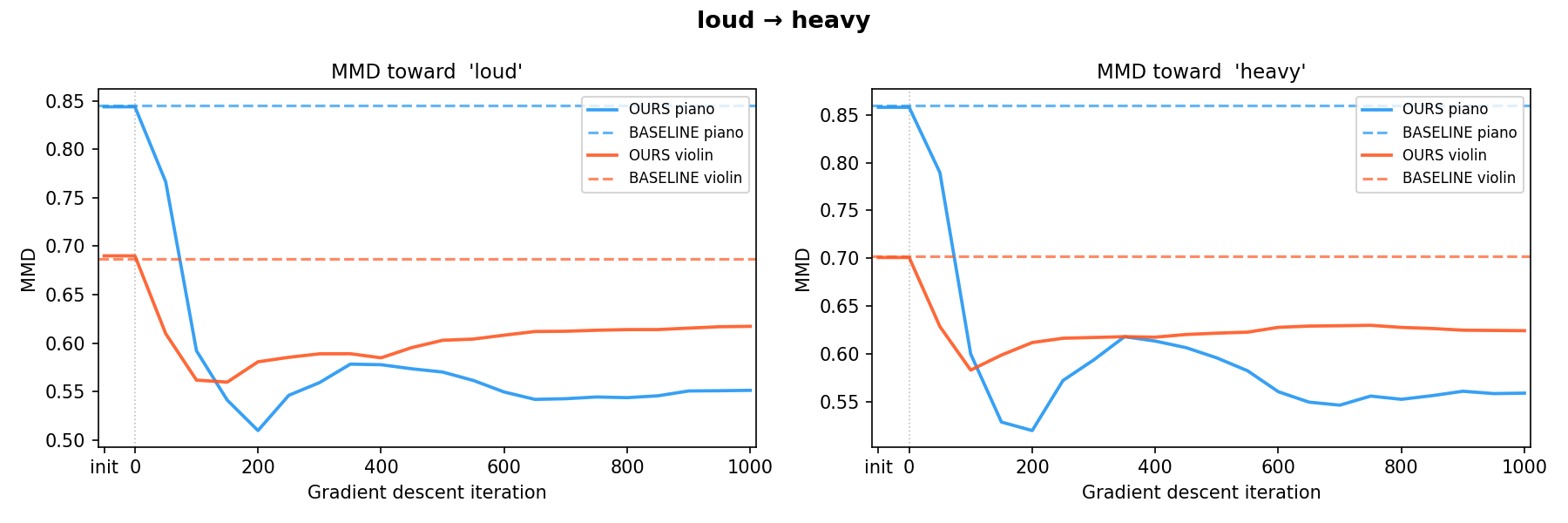

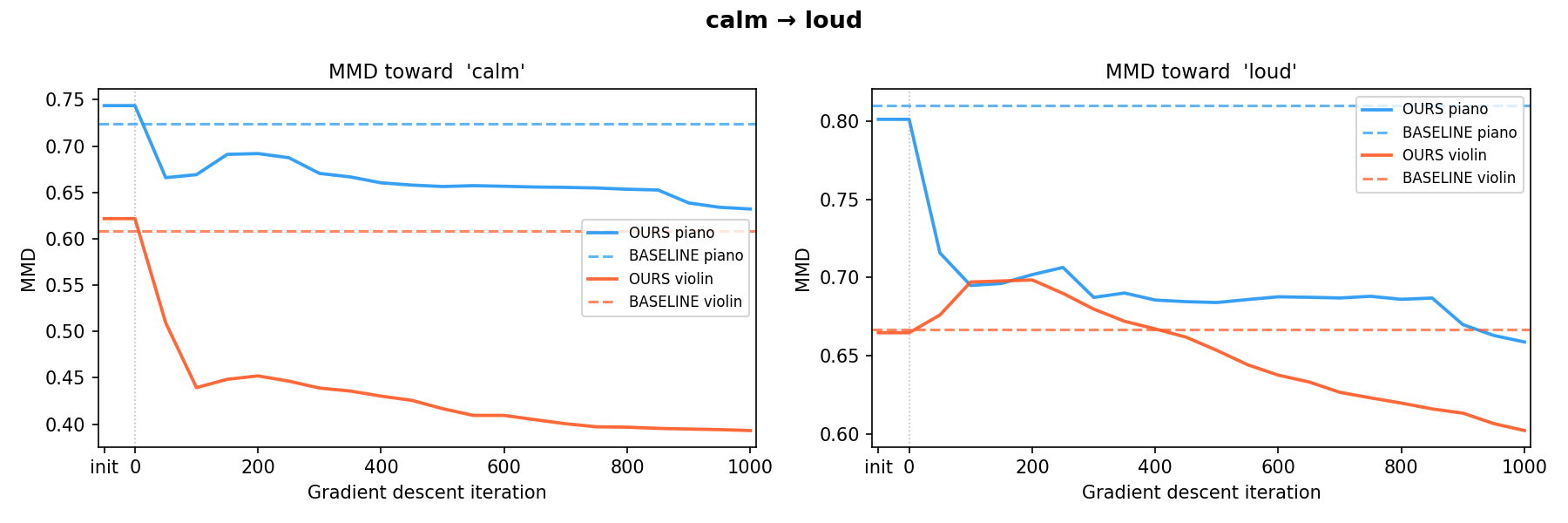

Exp 2: MMD Convergence Trajectory

Good case: loud → heavy (both instruments stay below baseline)

Messy case: calm → loud (violin stays above baseline on the loud panel for ~0–400 iterations)

What works

- Sharp drop early; often below LLM+LLM baseline

Where it struggles

- Overshoot / drift later in optimization

- CLAP objectives don’t match DSP-feature MMD


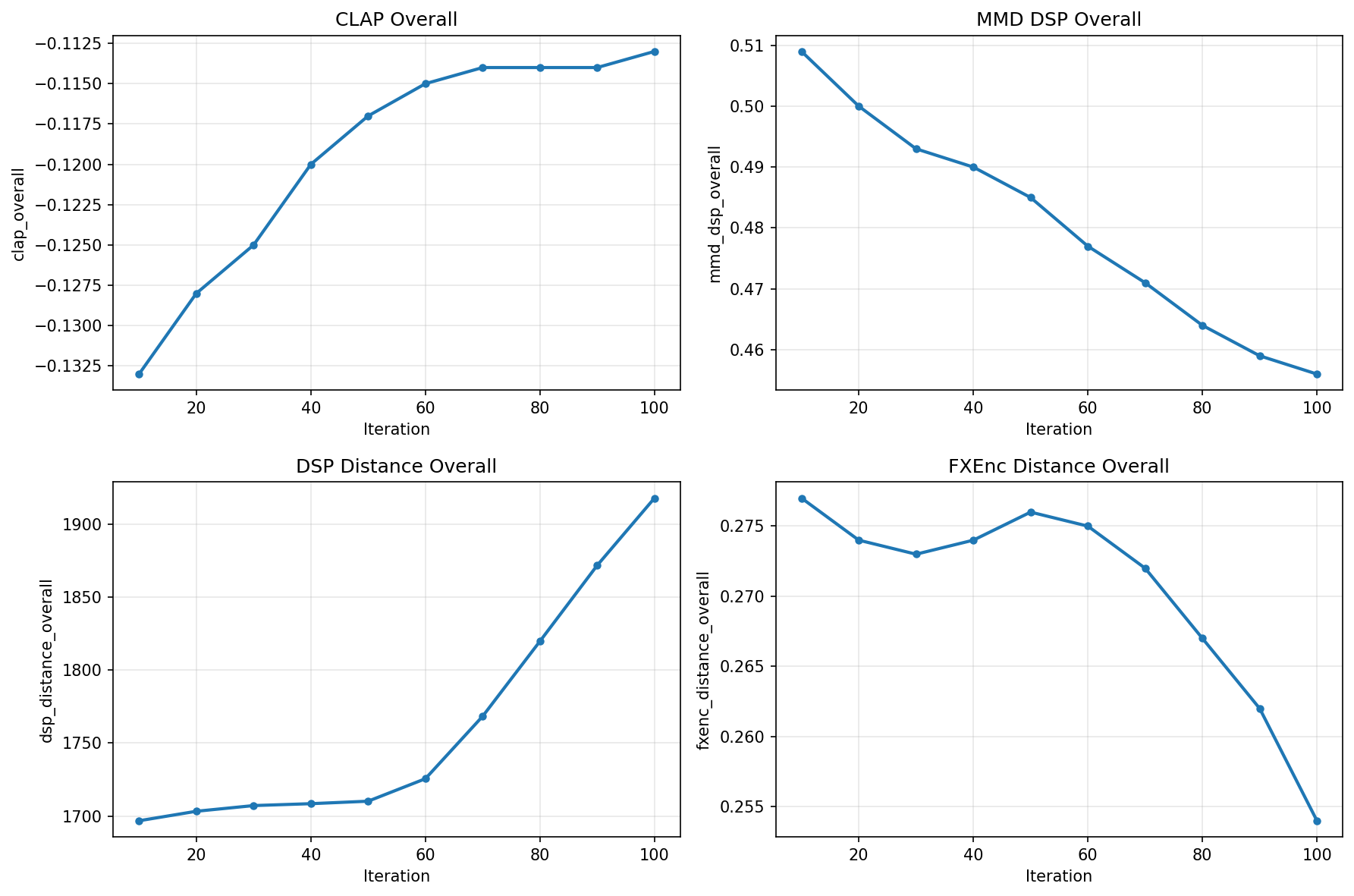

Exp 5: LLM Initialization Ablation

Does CLAP refinement actually help beyond LLM initialization? ("bright" target, piano)

CLAP score, MMD, DSP & FXEnc distance across 100 optimization iterations

✓ CLAP ↑ monotonically; MMD DSP ↓ converges toward GT; FXEnc ↓ stabilizes after iter 60

⚠ DSP distance ↑ throughout — CLAP optimization and DSP features are not always aligned


How We Got Here

Research arc: judge loops → optimization → InstructFX2FX

Phase 01

v1 Judge loop

LLM emits FX presets; separate judge scores outputs; reprompt on low score.

Roadblock

Judge fails

Noisy descriptors; no fair numeric “warm” score; LLM reprompts don’t converge.

Pivot

Reframe goal

Stop judging absolutes; move audio toward user intent.

v2 Optimize

Human-in-the-loop

Text2FX-style CLAP tuning: the user listens and steers with natural-language feedback instead of relying on a brittle judge. Single effect chain.

Now

InstructFX2FX

1. SocialFX MMD / PCA evaluation

2. Architecture harness

3. Demo website

Fall ’25

Fall ’25

Nov ’25

Dec ’25

Spring ’26

Timeline


Limitations & Future Work

Current Limitations

- EQ-only evaluation (SocialFX dataset constraint)

- CLAP embedding space may not capture all timbral nuances

- Pedalboard effects use BO (slower, non-differentiable)

- Fixed negative anchor in guided loss

- Convergence speed: several seconds per optimization

Future Directions

- Multi-effect evaluation (comp, reverb, distortion)

- Human listening study for perceptual validation

- Fine-tuned CLAP for audio FX domain

- Real-time interactive interface

- DAW plugin integration (VST/AU)


Thank You!

Group 2: Song-Ze Yu, Charlotte Zhang, Yuxuan Cai, Milan Liessens Dujardin

CNMAT, UC Berkeley


Questions?